2026

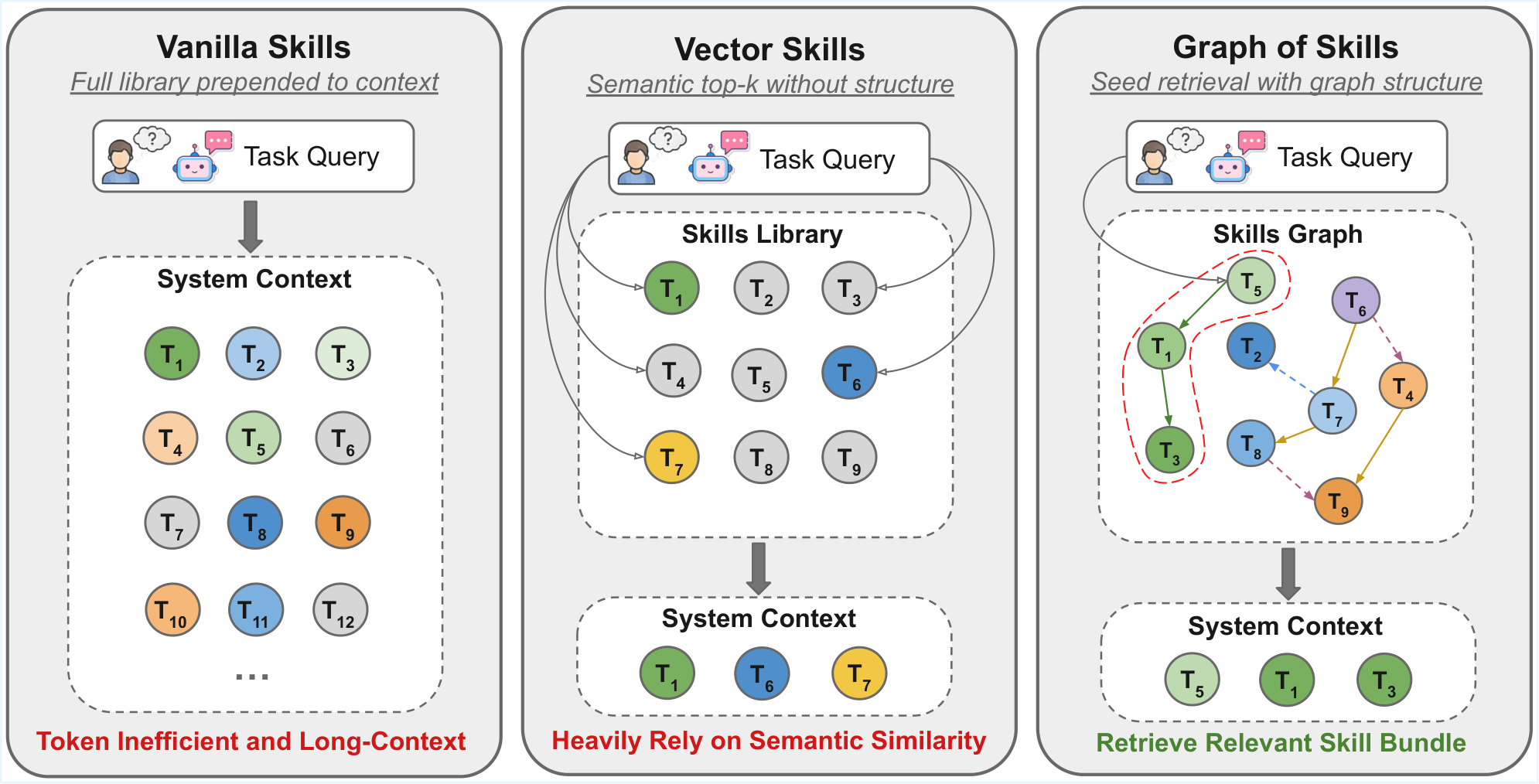

Graph of Skills: Dependency-Aware Structural Retrieval for Massive Agent Skills

Dawei Liu*, Zongxia Li*, Hongyang Du, Xiyang Wu, Shihang Gui, Yongbei Kuang, Lichao Sun (* equal contribution)

Preprint 2026

TL;DR: Graph of Skills (GoS): offline skill dependency graph + inference-time retrieval (seeding + PageRank + token budget) so agents load a small, relevant skill bundle instead of the whole library—higher reward, fewer tokens.

Graph of Skills: Dependency-Aware Structural Retrieval for Massive Agent Skills

Dawei Liu*, Zongxia Li*, Hongyang Du, Xiyang Wu, Shihang Gui, Yongbei Kuang, Lichao Sun (* equal contribution)

Preprint 2026

TL;DR: Graph of Skills (GoS): offline skill dependency graph + inference-time retrieval (seeding + PageRank + token budget) so agents load a small, relevant skill bundle instead of the whole library—higher reward, fewer tokens.

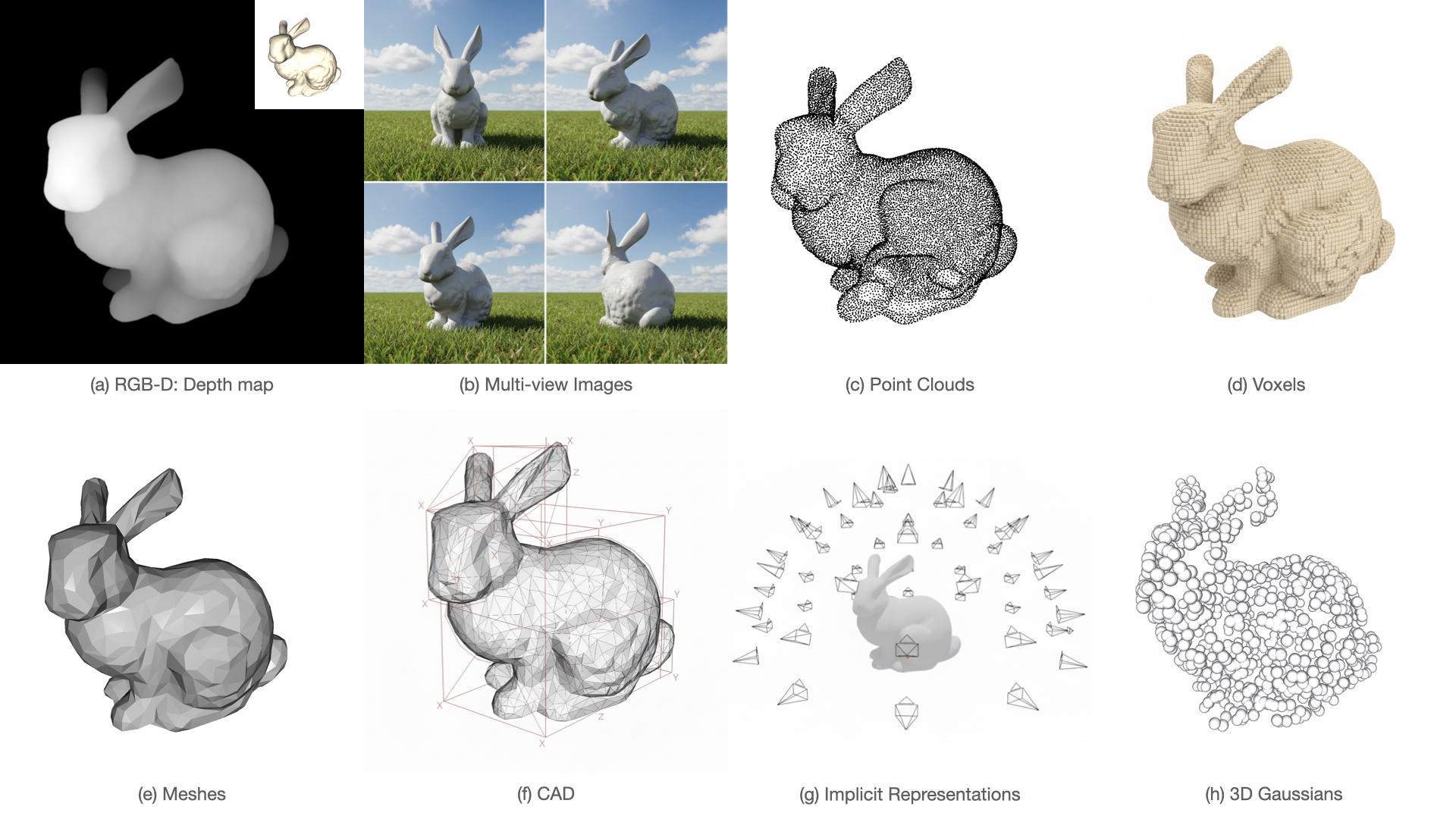

A Cookbook of 3D Vision: Data, Learning Paradigms, and Application

Hongyang Du*, Zongxia Li*, Dawei Liu*, Runhao Li*, Haoyuan Song, Qingyu Zhang, Yubo Wang, Jingcheng Ni, Shihang Gui, Congchao Dong, Tao Hu (* equal contribution)

CVPR Workshop 2026

TL;DR: A data-centric “cookbook” of 3D vision: how representations (points, meshes, Gaussians, etc.), datasets, and learning setups connect to reconstruction, generation, and video / 4D trends.

A Cookbook of 3D Vision: Data, Learning Paradigms, and Application

Hongyang Du*, Zongxia Li*, Dawei Liu*, Runhao Li*, Haoyuan Song, Qingyu Zhang, Yubo Wang, Jingcheng Ni, Shihang Gui, Congchao Dong, Tao Hu (* equal contribution)

CVPR Workshop 2026

TL;DR: A data-centric “cookbook” of 3D vision: how representations (points, meshes, Gaussians, etc.), datasets, and learning setups connect to reconstruction, generation, and video / 4D trends.

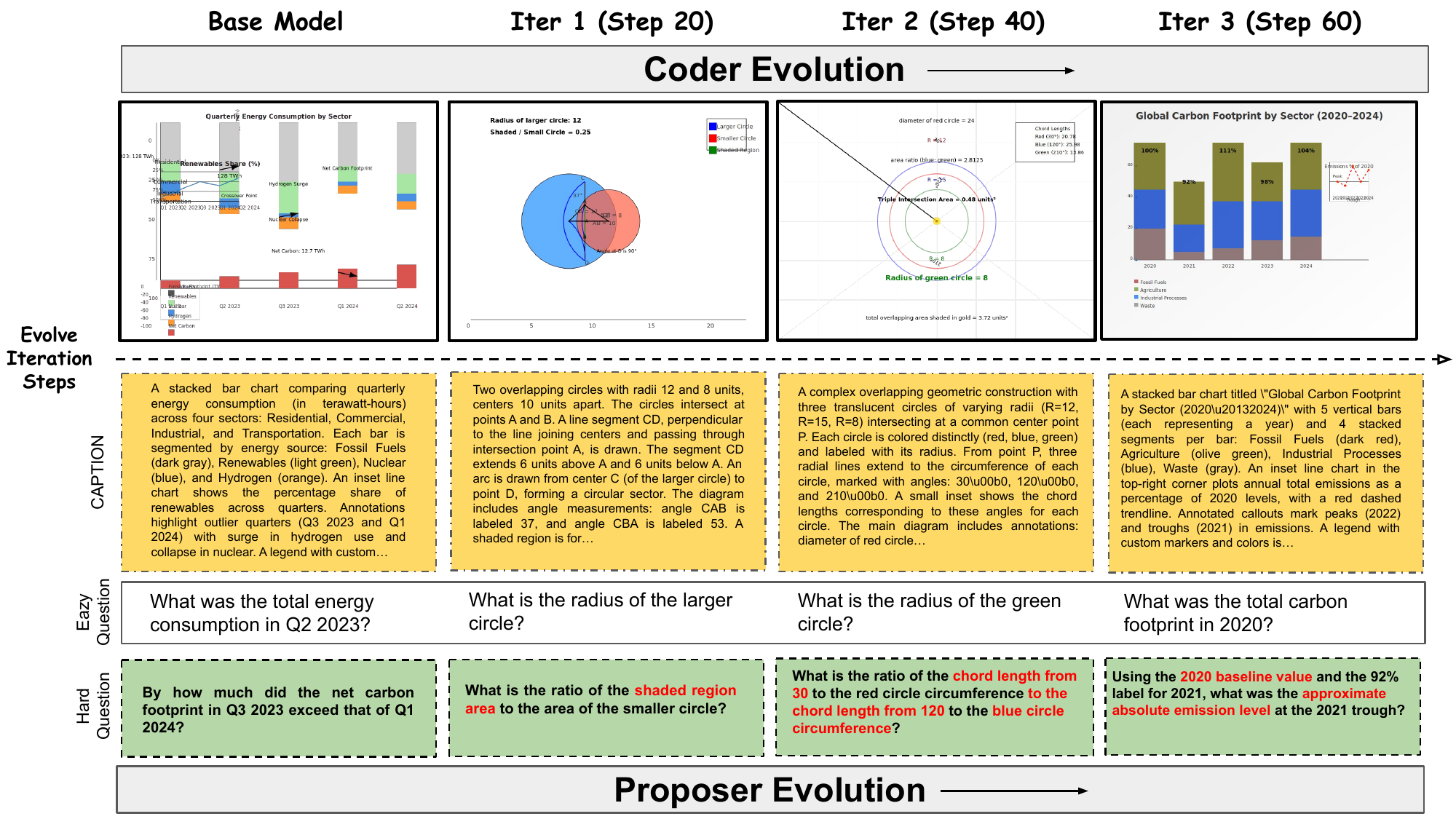

MM-Zero: Self-Evolving Multi-Model Vision Language Models From Zero Data

Zongxia Li*, Hongyang Du*, Chengsong Huang*, Xiyang Wu, Lantao Yu, Yicheng He, Jing Xie, Xiaomin Wu, Zhichao Liu, Jiarui Zhang, Fuxiao Liu (* equal contribution)

Preprint 2026

TL;DR: MM-Zero trains one base VLM in three roles—Proposer, Coder (code-to-image), Solver—with GRPO and rich rewards, achieving self-evolution on multimodal reasoning without any seed images.

MM-Zero: Self-Evolving Multi-Model Vision Language Models From Zero Data

Zongxia Li*, Hongyang Du*, Chengsong Huang*, Xiyang Wu, Lantao Yu, Yicheng He, Jing Xie, Xiaomin Wu, Zhichao Liu, Jiarui Zhang, Fuxiao Liu (* equal contribution)

Preprint 2026

TL;DR: MM-Zero trains one base VLM in three roles—Proposer, Coder (code-to-image), Solver—with GRPO and rich rewards, achieving self-evolution on multimodal reasoning without any seed images.

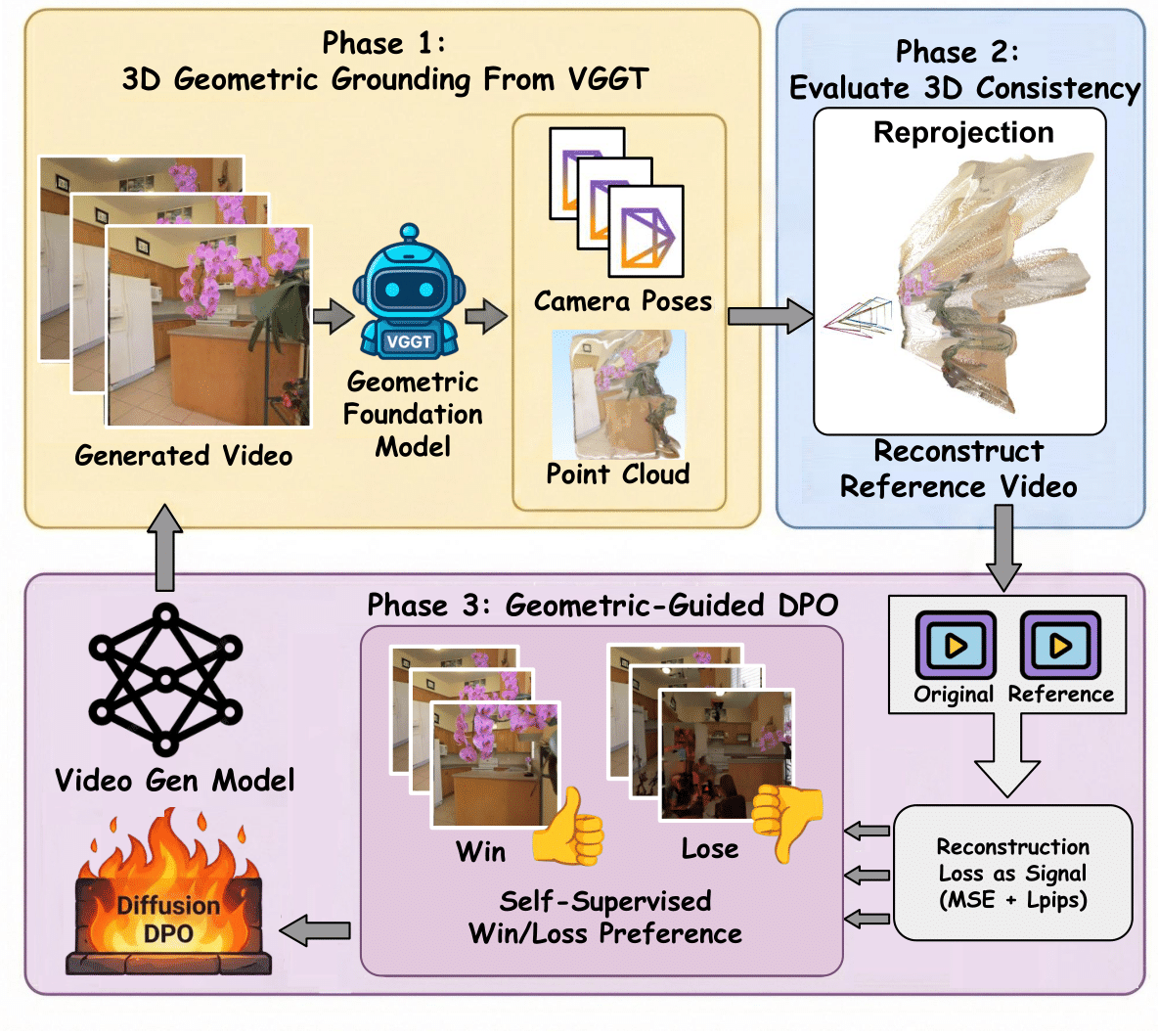

VideoGPA: Distilling Geometry Priors for 3D-Consistent Video Generation

Hongyang Du*, Junjie Ye*, Xiaoyan Cong*, Runhao Li, Jingcheng Ni, Aman Agarwal, Zeqi Zhou, Zekun Li, Randall Balestriero, Yue Wang (* equal contribution)

ICML 2026

TL;DR: VideoGPA uses a geometry foundation model to mine preference pairs for DPO, nudging video diffusion toward 3D-consistent motion without hand-labeled human preferences.

VideoGPA: Distilling Geometry Priors for 3D-Consistent Video Generation

Hongyang Du*, Junjie Ye*, Xiaoyan Cong*, Runhao Li, Jingcheng Ni, Aman Agarwal, Zeqi Zhou, Zekun Li, Randall Balestriero, Yue Wang (* equal contribution)

ICML 2026

TL;DR: VideoGPA uses a geometry foundation model to mine preference pairs for DPO, nudging video diffusion toward 3D-consistent motion without hand-labeled human preferences.

2025

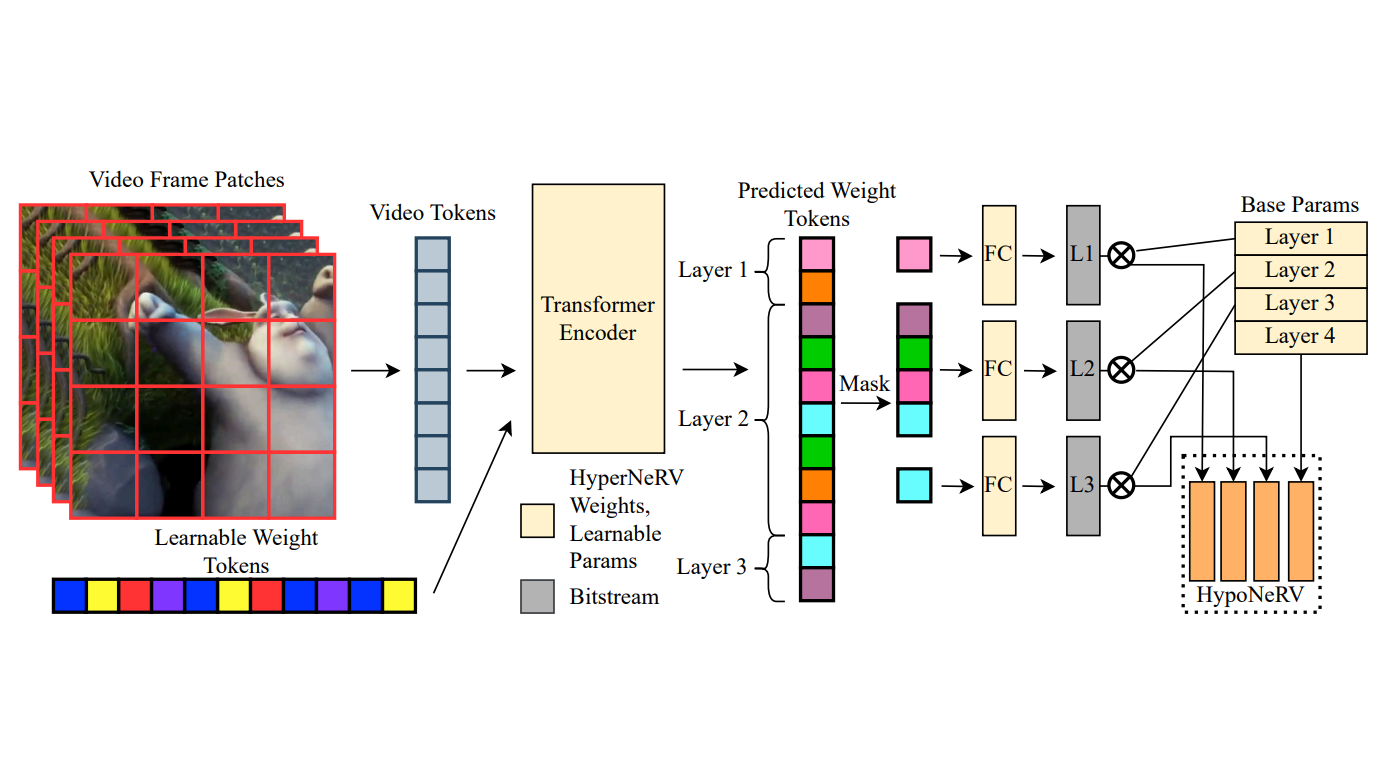

How to Design and Train Your Implicit Neural Representation for Video Compression

Matthew Gwilliam, Roy Zhang, Namitha Padmanabhan, Hongyang Du, Abhinav Shrivastava

WACV 2026

TL;DR: We dissect NeRV-style video INRs in a library, propose Rabbit NeRV (RNeRV) as a strong recipe under equal training budget, then use hyper-networks + weight masking to speed encoding while keeping quality competitive.

How to Design and Train Your Implicit Neural Representation for Video Compression

Matthew Gwilliam, Roy Zhang, Namitha Padmanabhan, Hongyang Du, Abhinav Shrivastava

WACV 2026

TL;DR: We dissect NeRV-style video INRs in a library, propose Rabbit NeRV (RNeRV) as a strong recipe under equal training budget, then use hyper-networks + weight masking to speed encoding while keeping quality competitive.

VideoHallu: Evaluating and Mitigating Multi-modal Hallucinations for Synthetic Videos

Zongxia Li*, Xiyang Wu*, Yubin Qin, Hongyang Du, Guangyao Shi, Dinesh Manocha, Tianyi Zhou, Jordan Lee Boyd-Graber (* equal contribution)

NeurIPSs 2025

TL;DR: VideoHallu: benchmark of synthetic videos (e.g. from frontier generators) with QA that exposes commonsense and physics failures; we show strong MLLMs still struggle and that GRPO fine-tuning (with counterexamples) helps.

VideoHallu: Evaluating and Mitigating Multi-modal Hallucinations for Synthetic Videos

Zongxia Li*, Xiyang Wu*, Yubin Qin, Hongyang Du, Guangyao Shi, Dinesh Manocha, Tianyi Zhou, Jordan Lee Boyd-Graber (* equal contribution)

NeurIPSs 2025

TL;DR: VideoHallu: benchmark of synthetic videos (e.g. from frontier generators) with QA that exposes commonsense and physics failures; we show strong MLLMs still struggle and that GRPO fine-tuning (with counterexamples) helps.

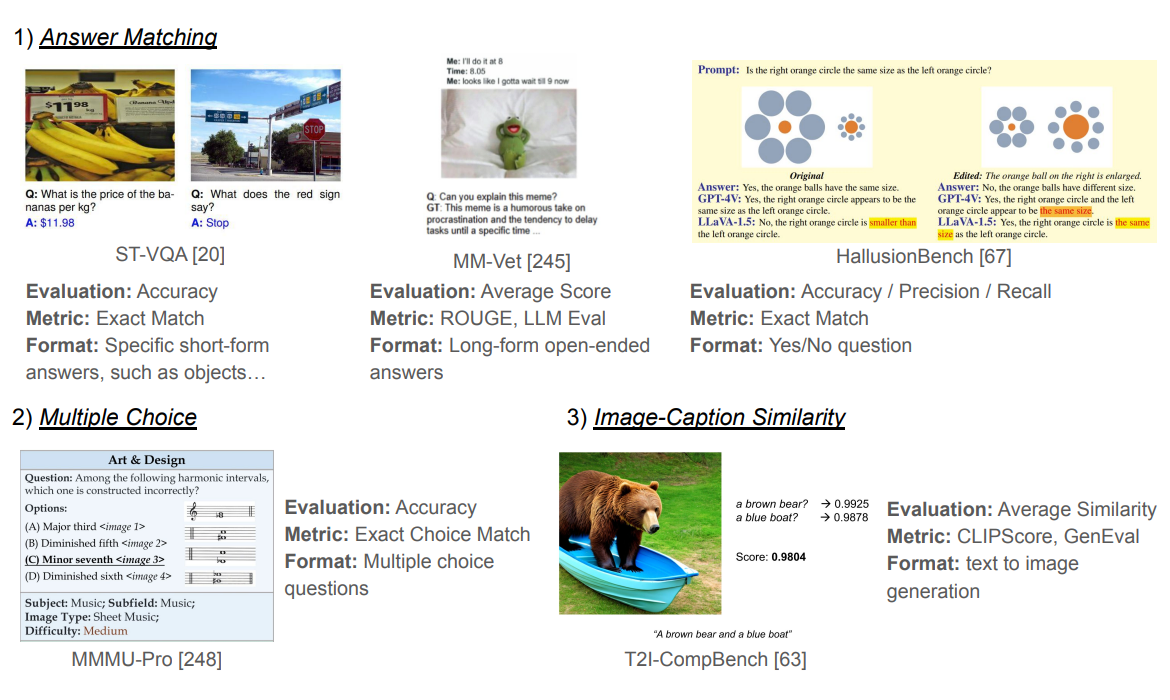

A Survey of State of the Art Large Vision Language Models: Alignment, Benchmark, Evaluations and Challenges

Zongxia Li*, Xiyang Wu*, Hongyang Du, Huy Nghiem, Guangyao Shi (* equal contribution)

CVPR Workshop 2025 Oral

TL;DR: Survey of large VLMs (roughly 2019–2024): model families, training, benchmarks, applications (agents, robotics, video), and open problems like hallucination and safety.

A Survey of State of the Art Large Vision Language Models: Alignment, Benchmark, Evaluations and Challenges

Zongxia Li*, Xiyang Wu*, Hongyang Du, Huy Nghiem, Guangyao Shi (* equal contribution)

CVPR Workshop 2025 Oral

TL;DR: Survey of large VLMs (roughly 2019–2024): model families, training, benchmarks, applications (agents, robotics, video), and open problems like hallucination and safety.

2024

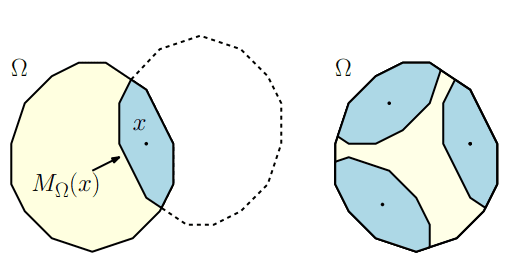

Ipelets for the Convex Polygonal Geometry

Nithin Parepally, Ainesh Chatterjee, Auguste Gezalyan, Hongyang Du, Sukrit Mangla, Kenny Wu, Sarah Hwang, David Mount

40th International Symposium on Computational Geometry (SoCG) 2024

TL;DR: New Ipelets for the Ipe editor that draw and explore convex polygonal geometry—Funk/Hilbert metric balls, polar bodies, enclosing balls, MSTs, and polygon utilities (union, intersection, Minkowski sum).

Ipelets for the Convex Polygonal Geometry

Nithin Parepally, Ainesh Chatterjee, Auguste Gezalyan, Hongyang Du, Sukrit Mangla, Kenny Wu, Sarah Hwang, David Mount

40th International Symposium on Computational Geometry (SoCG) 2024

TL;DR: New Ipelets for the Ipe editor that draw and explore convex polygonal geometry—Funk/Hilbert metric balls, polar bodies, enclosing balls, MSTs, and polygon utilities (union, intersection, Minkowski sum).